I have a Master’s Degree cum laude in Theoretical Physics. When I started my journey into data science, I realized how useful that background is for this kind of job.

My background

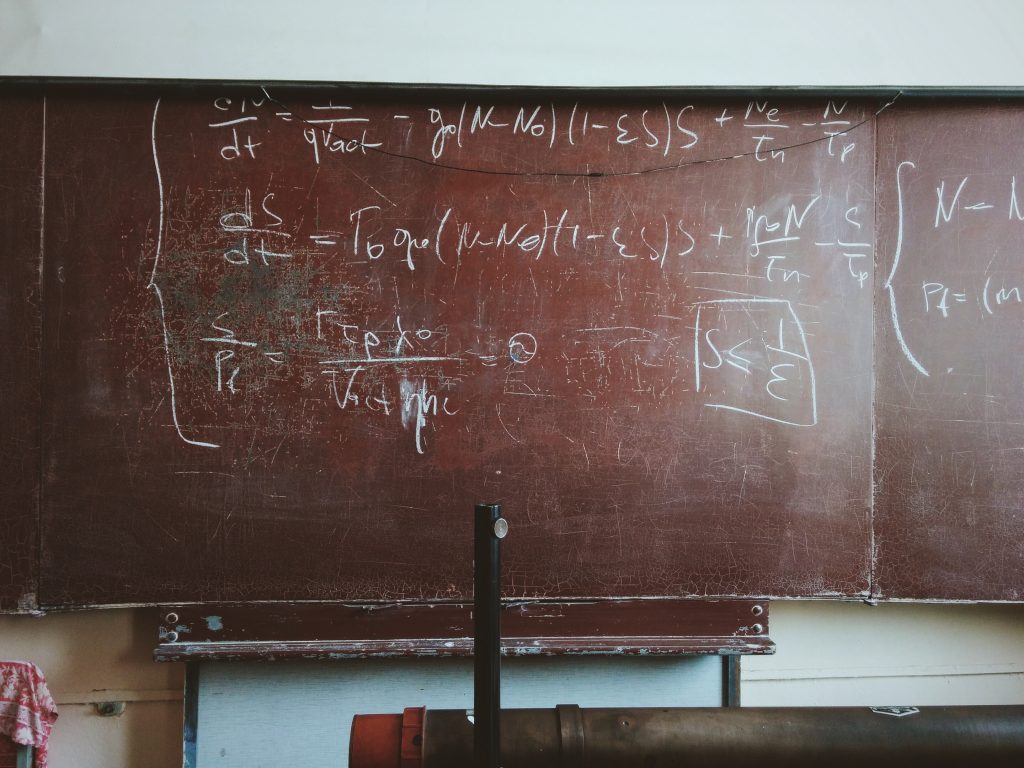

I studied Physics at “Sapienza” University of Rome and obtained my Bachelor’s Degree in 2008. Then I started studying for my Master’s Degree in Theoretical Physics, which I obtained in 2010. My focus was the theory of disordered systems and complexity.

Theoretical physics has been my love ever since my BS. I hated going to the laboratory and working with lasers and old computers that didn’t even have an updated Windows 98 system able to read USB pen drives (I’m not joking). Instead, I liked the programming and software development laboratories. I fondly remember a course on Computational Physics in which I learned Monte Carlo simulations and optimization algorithms. Everything was done in pure C (Python wasn’t as popular as it is now and Matlab was expensive). It was a lot of fun for me. Unfortunately, it wasn’t for most of my classmates.

Then I studied other programming languages by myself, like R and Python. I remember the transition from Python 2 to Python 3 and how hard it was.

I can say that those 5 years were stimulating and wonderful. Here are some things I learned then and used later in my job.

The scientific approach

During my academic studies, I was trained to take a strongly scientific approach to problems: find the cause and remove it. This is very common in software development and in science, and it becomes important in Data Science too. Extracting information from data is actually a very hard problem that you have to tackle backward: you start from the problem, then work back to its cause to find a solution. It’s always done this way, and Data Science relies heavily on this approach.

Don’t be afraid of approximations

Not every solution must be exact. Physics has taught me that approximations are acceptable as long as you can control their error and give clear reasons why you need them. In science, there is never enough time to get the best possible results, so scientists often use approximations in order to publish partial results while they keep working toward better solutions. Data Science works in a very similar way. You always have to approximate something (remove that variable, simplify that target and so on). If you look for perfection, you’ll never get anything good, while somebody else will make money with an approximate and quicker solution. Don’t be afraid of approximations. Instead, use them as a storytelling tool: “We start with this approximation and here’s the result, then we move to this other approximation and see what happens”. This is a good way to perform an analysis because approximations can give you a clearer overview of what happens and help you design the next steps. Remember: you accept the risk of the approximation, so you’ll have to manage it.
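To make “controlling the error” concrete, here is a minimal sketch based on a textbook case that is assumed here for illustration and is not taken from any specific analysis: the small-angle approximation sin(x) ≈ x. Its error is known to be bounded by |x|³/6, so you can decide in advance how large an angle you are willing to accept. The function names are hypothetical.

```python
import math

def small_angle_sin(x):
    """Approximate sin(x) by x (valid for small angles, x in radians)."""
    return x

def error_bound(x):
    """Upper bound on |sin(x) - x|, from the first neglected Taylor term."""
    return abs(x) ** 3 / 6

# Compare the real error with the bound we can compute in advance.
for degrees in (1, 5, 10, 20):
    x = math.radians(degrees)
    actual_error = abs(math.sin(x) - small_angle_sin(x))
    print(f"{degrees:>2} deg: error = {actual_error:.2e}, bound = {error_bound(x):.2e}")
```

The point is not the formula itself but the habit: the approximation comes with a number that tells you when to stop trusting it.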

The basic statistics tools

In the first year of my BS, I learned the most common statistical tools to analyze data: probability distributions, hypothesis tests and standard errors. I can’t stress enough the need to calculate standard errors. Physicists are hated by everybody because they focus on the errors in measurements, and they are right to do so, because a measurement without an error estimate doesn’t give us any information. Anyway, most of the statistical tools I’ve written about in my articles over the years came from the first year of my BS. Only the bootstrap came during the third year, and stochastic processes came during the second year of my MS. Physicists live with data and by analyzing them, so it’s the first thing they teach you. Even theoretical physicists have to analyze data because they work with Monte Carlo simulations, which are simulated experiments. So, Physics has given me the right statistical tools to analyze any kind of data.
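As a small illustration of what reporting a measurement with its error looks like in practice, the sketch below computes the standard error of the mean in two ways: with the usual analytic formula and with a simple bootstrap. The data are simulated, and the function names, sample size and number of resamples are all assumptions made for this example.

```python
import math
import random

def mean_and_standard_error(values):
    """Return the sample mean and its analytic standard error (s / sqrt(n))."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return mean, math.sqrt(variance / n)

def bootstrap_standard_error(values, n_resamples=2000, seed=0):
    """Estimate the standard error of the mean by resampling with replacement."""
    rng = random.Random(seed)
    n = len(values)
    means = []
    for _ in range(n_resamples):
        resample = [values[rng.randrange(n)] for _ in range(n)]
        means.append(sum(resample) / n)
    grand_mean = sum(means) / n_resamples
    spread = sum((m - grand_mean) ** 2 for m in means) / (n_resamples - 1)
    return math.sqrt(spread)

# Simulated "measurements": 200 draws from a Gaussian (assumed for the example).
rng = random.Random(42)
measurements = [rng.gauss(10.0, 2.0) for _ in range(200)]

mean, sem = mean_and_standard_error(measurements)
boot_sem = bootstrap_standard_error(measurements)
print(f"mean = {mean:.2f} +/- {sem:.2f} (analytic), +/- {boot_sem:.2f} (bootstrap)")
```

For a simple statistic like the mean, the two estimates should agree closely. The bootstrap becomes more useful when no simple formula for the error is available.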

Data is everything

Professional Data Scientists know that data is everything and that algorithms matter less than data quality. When you perform an experiment in a laboratory, the data returned by that experiment are the bible and must be respected. You cannot perform any analysis on noise; instead, you must extract the signal from the noise, and that’s the most difficult task in data analysis. Physics taught me how to respect data, and that’s a fundamental skill for a data scientist.

So, these are the reasons why Physics has made me a better Data Scientist. Of course, Physics is not for everybody and is not a necessary skill, but I think that it can be really helpful for starting this wonderful career.