The Levene test for variance is a statistical test that is used to determine whether or not the variances of two or more groups are equal. This test is often used in experimental design to ensure that the groups being compared are similar in terms of their variability. In this post, I will discuss the benefits of the Levene test and provide an example of how to use it in Python to compare the variance of a sample drawn from a normal distribution and a sample drawn from a uniform distribution.

Benefits of the Levene Test

One of the main benefits of the Levene test is that it can be used with data that is not normally distributed. Normal-theory tests such as the F-test and Bartlett's test are very sensitive to departures from normality, and can reject equal variances simply because the data is heavy-tailed or skewed. The Levene test is much less sensitive to such departures, so its p-values stay closer to their nominal level. This makes it an important tool for experimental design and data analysis, as it allows us to draw more reliable conclusions about our data and make better decisions in our research.
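To make the robustness claim concrete, here is a small simulation sketch. It draws both groups from the same heavy-tailed Laplace distribution, so the variances are truly equal and every rejection is a false positive. Bartlett's test (scipy.stats.bartlett) stands in for the normal-theory tests here; the distribution, the sample size and the number of repetitions are assumptions chosen only for illustration.

```python
import numpy as np
from scipy.stats import bartlett, levene

rng = np.random.default_rng(42)
n_sims, n, alpha = 1000, 50, 0.05  # illustrative settings, not from the post

bartlett_rejections = 0
levene_rejections = 0
for _ in range(n_sims):
    # Both groups share one Laplace distribution, so the null hypothesis is true
    a = rng.laplace(size=n)
    b = rng.laplace(size=n)
    bartlett_rejections += int(bartlett(a, b).pvalue < alpha)
    levene_rejections += int(levene(a, b).pvalue < alpha)

# Fraction of simulations in which each test wrongly rejected equal variances
bartlett_rate = bartlett_rejections / n_sims
levene_rate = levene_rejections / n_sims
print(f"Bartlett false-positive rate: {bartlett_rate:.3f}")
print(f"Levene false-positive rate:   {levene_rate:.3f}")
```

In runs like this, Bartlett's test tends to reject far more often than the nominal 5%, while the Levene test stays much closer to it.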

Additionally, the median-centered version of the test (the Brown-Forsythe variant, which is the default in scipy.stats.levene) is reasonably robust to outliers and skewness, making it a suitable choice for datasets that may have extreme values. The measured variable must be numeric, while the groups are defined by a categorical factor, and any number of groups (two or more) can be compared at once. Strictly speaking, the test is not non-parametric: it is a one-way ANOVA on the absolute deviations of each observation from its group center, and its p-value comes from an F distribution that holds only approximately. In practice, though, it does not require normality, which makes it a good alternative to tests that do. In summary, the Levene test for variance is a robust and versatile tool that can be used to determine whether the variances of two or more groups are equal, even when the data is not normally distributed.
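The Levene statistic can be reproduced by hand as a one-way ANOVA on absolute deviations from each group's center. The sketch below does this with the group median as the center and compares the result with scipy.stats.levene; the three groups are made-up data used only for illustration.

```python
import numpy as np
from scipy.stats import f_oneway, levene

rng = np.random.default_rng(0)
# Hypothetical groups with different spreads and shapes
groups = [
    rng.normal(0, 1, 40),
    rng.normal(0, 2, 40),
    rng.exponential(1.0, 40),
]

# Absolute deviation of every observation from its own group median
abs_dev = [np.abs(g - np.median(g)) for g in groups]

# One-way ANOVA on the deviations vs. the built-in median-centered Levene test
manual = f_oneway(*abs_dev)
builtin = levene(*groups, center="median")

print(manual.statistic, builtin.statistic)
print(manual.pvalue, builtin.pvalue)
```

The two pairs of numbers should agree up to floating-point error, which shows that the test compares the average size of the deviations across groups rather than the variances directly.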

Example in Python

To demonstrate the use of the Levene test, let’s consider an example where we want to compare the variance of a sample drawn from a normal distribution and a sample drawn from a uniform distribution. In this example, we will use the Python programming language and the scipy.stats library to perform the test.

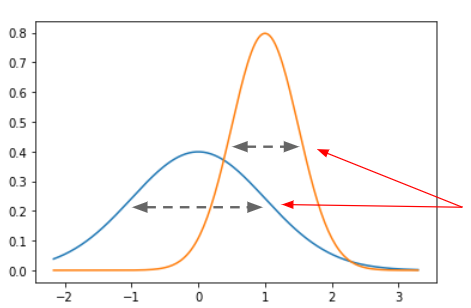

First, let’s generate the two samples. We will use the numpy library to generate a sample of 100 values from a normal distribution with a mean of 0 and a standard deviation of 1. We will also generate a sample of 100 values from a uniform distribution with a minimum value of 0 and a maximum value of 1.

import numpy as np

np.random.seed(0)

normal_sample = np.random.normal(0, 1, 100)

uniform_sample = np.random.uniform(0, 1, 100)

Next, we will use the scipy.stats library to perform the Levene test. The levene() function takes the samples to compare as positional arguments, here the normal sample and the uniform sample.
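levene() is not limited to two samples, and its center keyword selects which group center the deviations are measured from ("median" is the default, with "mean" and "trimmed" as alternatives). A minimal sketch with three illustrative groups, which are not part of the example in this post:

```python
import numpy as np
from scipy.stats import levene

rng = np.random.default_rng(1)
# Three hypothetical groups, used only to show the call signature
g1 = rng.normal(0, 1, 100)
g2 = rng.uniform(0, 1, 100)
g3 = rng.exponential(1.0, 100)

results = {}
for center in ("median", "mean", "trimmed"):
    # Any number of samples can be passed positionally
    results[center] = levene(g1, g2, g3, center=center)
    print(center, results[center].statistic, results[center].pvalue)
```

The median is usually the safer choice for skewed data, while the mean corresponds to Levene's original formulation.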

from scipy.stats import levene

levene_test = levene(normal_sample, uniform_sample)

The levene_test variable now contains the results of the test. The p-value of the test is stored in the pvalue attribute of the levene_test object. If the p-value is less than 0.05, we reject the null hypothesis of equal variances at the 5% significance level.

levene_test

# LeveneResult(statistic=79.25451220839427, pvalue=3.504206996054385e-16)

As expected, the p-value is far below 0.05, so we reject the hypothesis of equal variances.
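The decision rule can also be written out explicitly instead of being read off the printed result. This sketch regenerates the same two samples so that it runs on its own; the alpha variable and the reject_equal_variances name are introduced here only for illustration.

```python
import numpy as np
from scipy.stats import levene

np.random.seed(0)
normal_sample = np.random.normal(0, 1, 100)
uniform_sample = np.random.uniform(0, 1, 100)

alpha = 0.05  # significance level, chosen before looking at the data
result = levene(normal_sample, uniform_sample)
statistic, pvalue = result.statistic, result.pvalue

# Reject the null hypothesis of equal variances when the p-value is below alpha
reject_equal_variances = pvalue < alpha
print(f"W = {statistic:.2f}, p = {pvalue:.3g}, reject = {reject_equal_variances}")
```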

Let’s now see what happens if the normal sample has the same variance as the uniform sample (which is 1/12).

second_normal_sample = np.random.normal(size=100, scale=np.sqrt(1/12))

levene(second_normal_sample, uniform_sample)

# LeveneResult(statistic=1.9316828189869752, pvalue=0.16613509863895887)

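This time the p-value is above 0.05, so the test does not reject the hypothesis of equal variances. Note that this result is consistent with the variances being equal, but it does not prove that they are.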
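As a sanity check on both outcomes, the sample variances can be compared with the theoretical ones. A uniform distribution on [a, b] has variance (b - a)^2 / 12, which gives 1/12 for [0, 1]. The sketch below regenerates the three samples in the same order as above; the dictionary and its labels are only for illustration.

```python
import numpy as np

np.random.seed(0)
normal_sample = np.random.normal(0, 1, 100)
uniform_sample = np.random.uniform(0, 1, 100)
second_normal_sample = np.random.normal(size=100, scale=np.sqrt(1/12))

# Theoretical variance of a uniform distribution on [0, 1]
theoretical_uniform_var = (1 - 0) ** 2 / 12

samples = {
    "normal (sd = 1)": normal_sample,
    "uniform [0, 1]": uniform_sample,
    "normal (sd = sqrt(1/12))": second_normal_sample,
}
# ddof=1 gives the unbiased sample variance
sample_vars = {name: np.var(s, ddof=1) for name, s in samples.items()}
for name, var in sample_vars.items():
    print(f"{name}: sample variance = {var:.4f}")
print(f"theoretical uniform variance = {theoretical_uniform_var:.4f}")
```

The first sample should have a variance close to 1, while the other two should both be close to 1/12, which matches what the two test results suggest.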

Conclusions

In conclusion, the Levene test for variance is an important tool for experimental design and data analysis. It allows us to determine whether or not the variances of two or more groups are equal and can be used with data that is not normally distributed. With the help of the Levene test, we can make more accurate conclusions about our data and make better decisions in our research.